Supervised Learning – Regression (Marketing Analysis: Sales Prediction & Automobile Price Prediction)

Model Evaluation Metrics (R²-score, MSE, MAE, RMSE)

Learning Outcome

5

Evaluate model performance using the R² score.

4

Convert and interpret MSE using Root Mean Squared Error (RMSE).

3

Explain why Mean Squared Error (MSE) penalizes large outliers.

2

Calculate and interpret Mean Absolute Error (MAE).

1

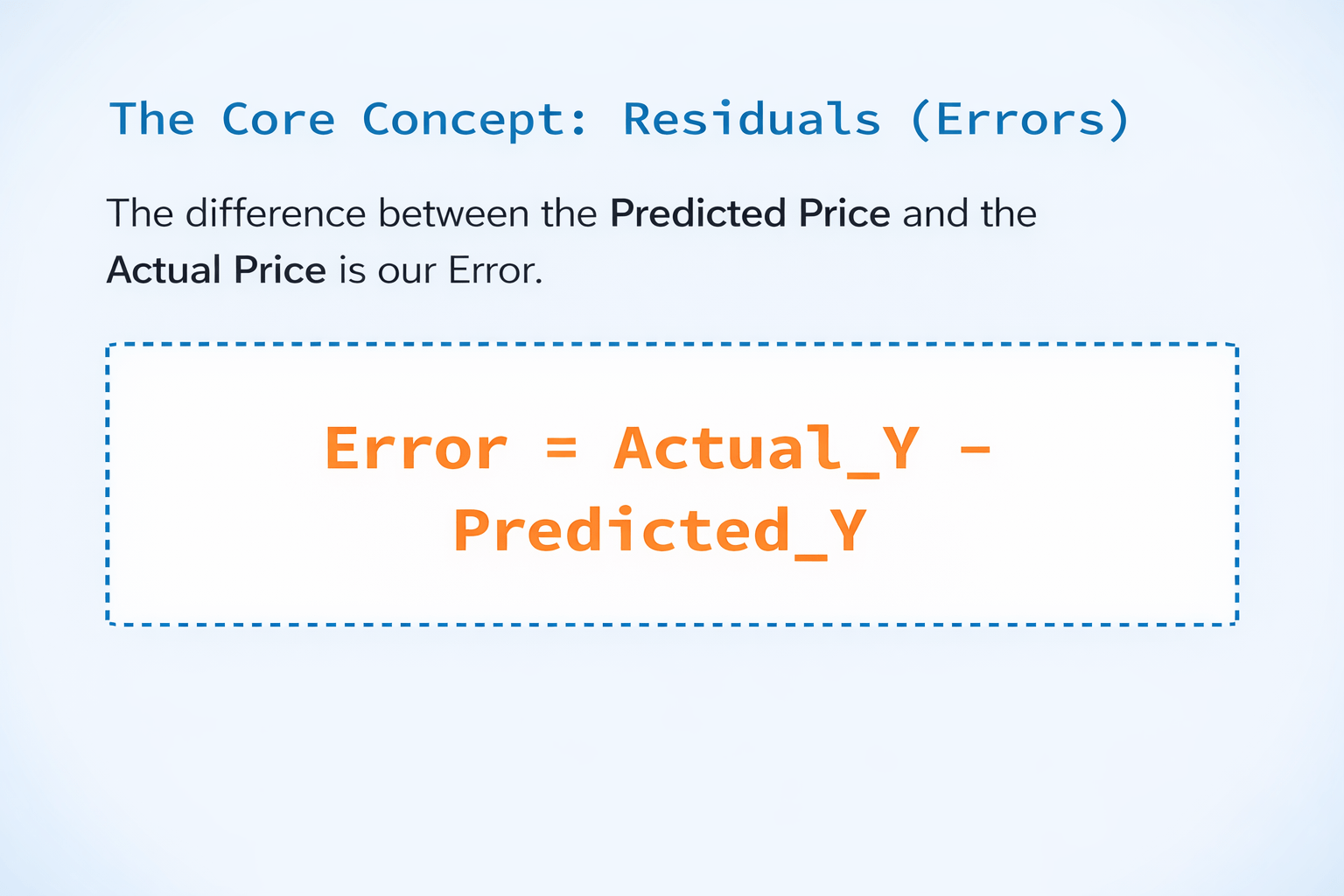

Define a Residual (error) in predictive modeling.

Let's Recall...

The Reality Check

The .predict() function always outputs a number, even if it's a terrible guess.
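`.predict()` always returns a number, so the first step in judging it is the residual: Actual - Predicted for each car. Below is a minimal sketch in plain Python. The helper `residuals` and all prices are hypothetical, for illustration only.

```python
# Hypothetical actual car prices and model predictions (illustrative numbers only)
actual_prices = [15000, 22000, 9500, 31000]
predicted_prices = [14200, 23500, 9500, 28000]

def residuals(actual, predicted):
    """Return the residual (Actual - Predicted) for each observation."""
    return [a - p for a, p in zip(actual, predicted)]

errors = residuals(actual_prices, predicted_prices)
print(errors)  # [800, -1500, 0, 3000]
```

A positive residual means the model under-predicted the price. A negative residual means it over-predicted.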

The Story So Far

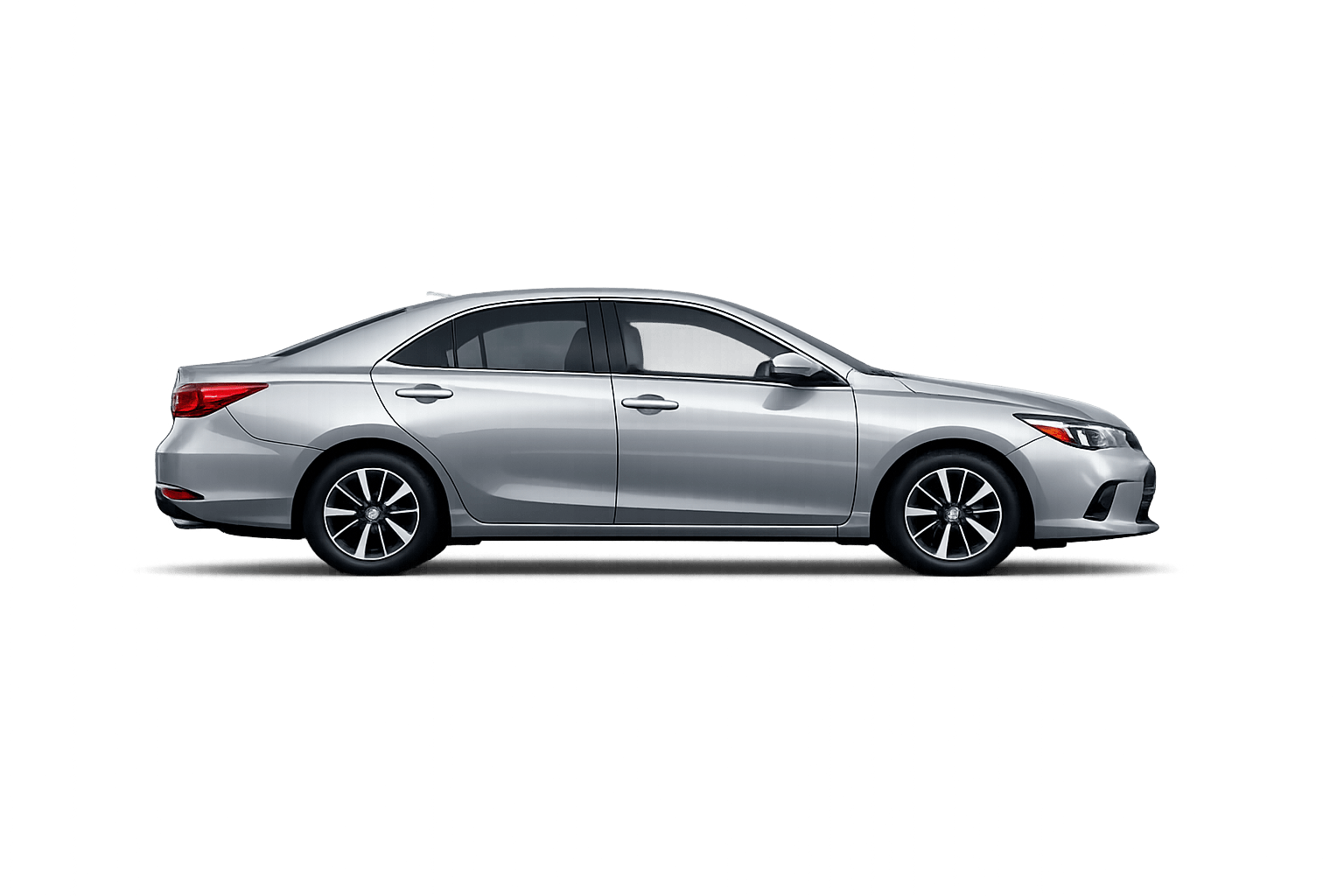

We've built Simple, Multiple, Polynomial, and Regularized regression models to predict car prices.

Suppose you hire two car appraisers...

Appraiser A

Predicts standard sedans perfectly

But misses the price of a rare Porsche by $20,000

Appraiser B

Is slightly off by $500 on every single car, but never makes a massive, catastrophic mistake

Who is the better appraiser?

Algorithms don't have opinions

We have to use specific mathematical formulas (Metrics) to tell the machine exactly what kind of mistakes we care about most.
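The appraiser dilemma can be made concrete with a small sketch. It assumes a hypothetical lot of 50 cars (49 standard sedans plus one rare Porsche), using the error sizes from the example. The car count and the helpers `mae` and `rmse` are illustrative choices, not part of the original material.

```python
import math

def mae(errors):
    """Mean Absolute Error: the average size of the errors."""
    return sum(abs(e) for e in errors) / len(errors)

def rmse(errors):
    """Root Mean Squared Error: square, average, then take the square root."""
    return math.sqrt(sum(e ** 2 for e in errors) / len(errors))

# Hypothetical lot: 49 sedans + 1 Porsche (errors in dollars)
appraiser_a = [0] * 49 + [20000]   # perfect on sedans, misses the Porsche by $20,000
appraiser_b = [500] * 50           # off by $500 on every car

for name, errs in [("A", appraiser_a), ("B", appraiser_b)]:
    print(f"Appraiser {name}: MAE = {mae(errs):,.0f}, RMSE = {rmse(errs):,.0f}")
# Appraiser A: MAE = 400, RMSE = 2,828
# Appraiser B: MAE = 500, RMSE = 500
```

With these assumed numbers, MAE ranks Appraiser A as better, while RMSE ranks Appraiser B as better. The metric you pick decides which kind of mistake counts most.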

Let's look at each one in detail...

Metric 1: MAE (Mean Absolute Error)

The "Everyday" Metric

The most intuitive error measurement

The Concept

Average absolute distance between predictions and actual values

The Formula

MAE = (1/n) Σ |Actual - Predicted|

The Automobile Interpretation

MAE = $1,200

"On average, our model's price prediction is off by $1,200."

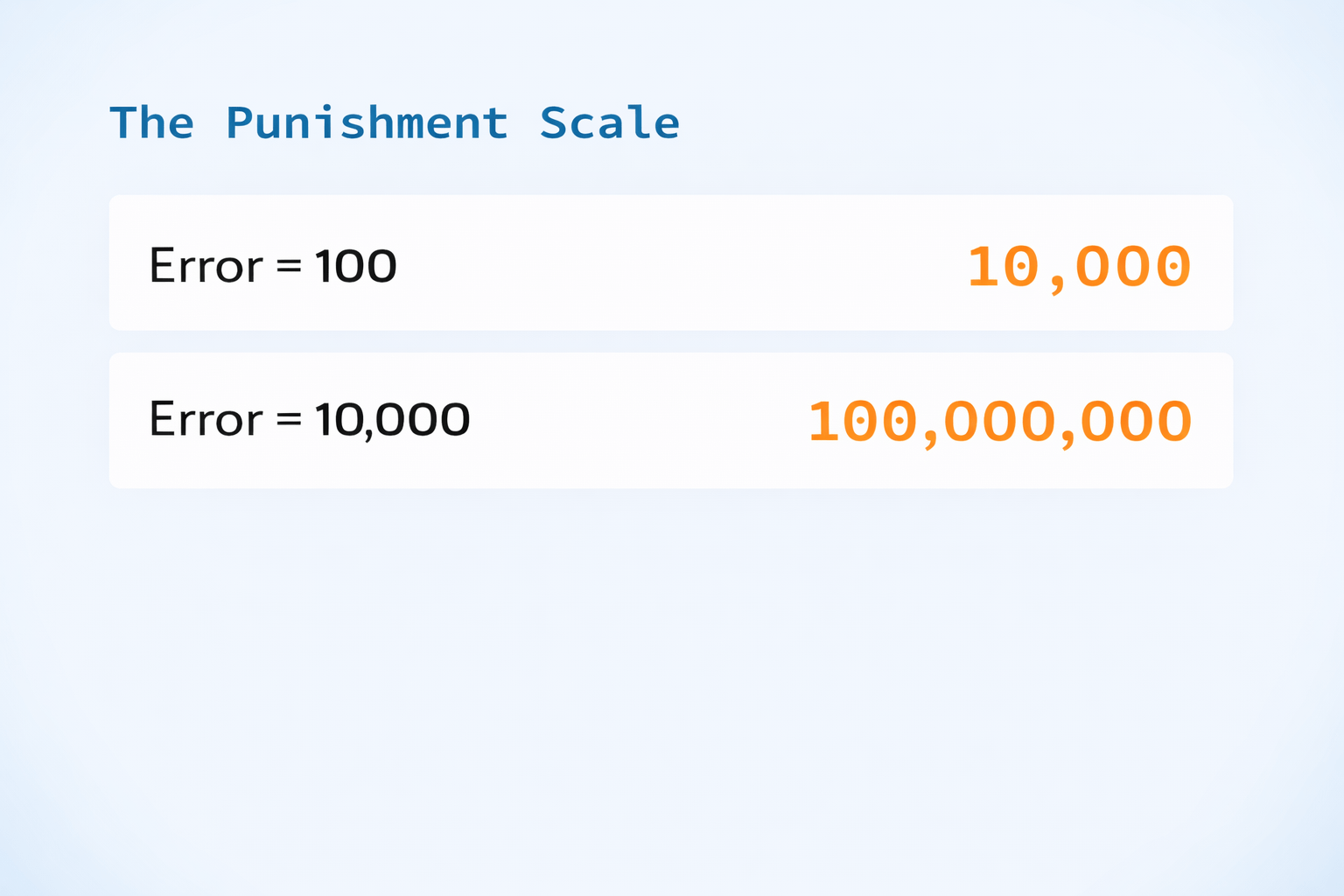
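The MAE formula translates almost line for line into code. Below is a minimal sketch that assumes prices are plain Python lists of dollars. The helper name `mean_absolute_error` and the prices are hypothetical. scikit-learn provides the same calculation as `sklearn.metrics.mean_absolute_error`.

```python
def mean_absolute_error(actual, predicted):
    """MAE = (1/n) * sum(|Actual - Predicted|)."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("actual and predicted must be non-empty and the same length")
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)

# Hypothetical prices in dollars
actual = [15000, 22000, 9500, 31000]
predicted = [14200, 23500, 9500, 28000]

print(mean_absolute_error(actual, predicted))  # 1325.0
```

Read the result as "on average, these predictions are off by $1,325." Taking the absolute value stops over-predictions and under-predictions from cancelling each other out.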

Metric 2: MSE (Mean Squared Error)

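The "Strict Punisher" Metric

Penalizes large errors far more heavily than small ones

The Formula

MSE = (1/n) Σ (Actual - Predicted)²

Why Squaring?

Squaring grows large errors much faster than small ones. A $100 error contributes 10,000, but a $10,000 error contributes 100,000,000. An error 100 times bigger costs 10,000 times more.

"Do not make massive mistakes!!"

The Flaw

Units are "dollars squared", which are unreadable for humans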

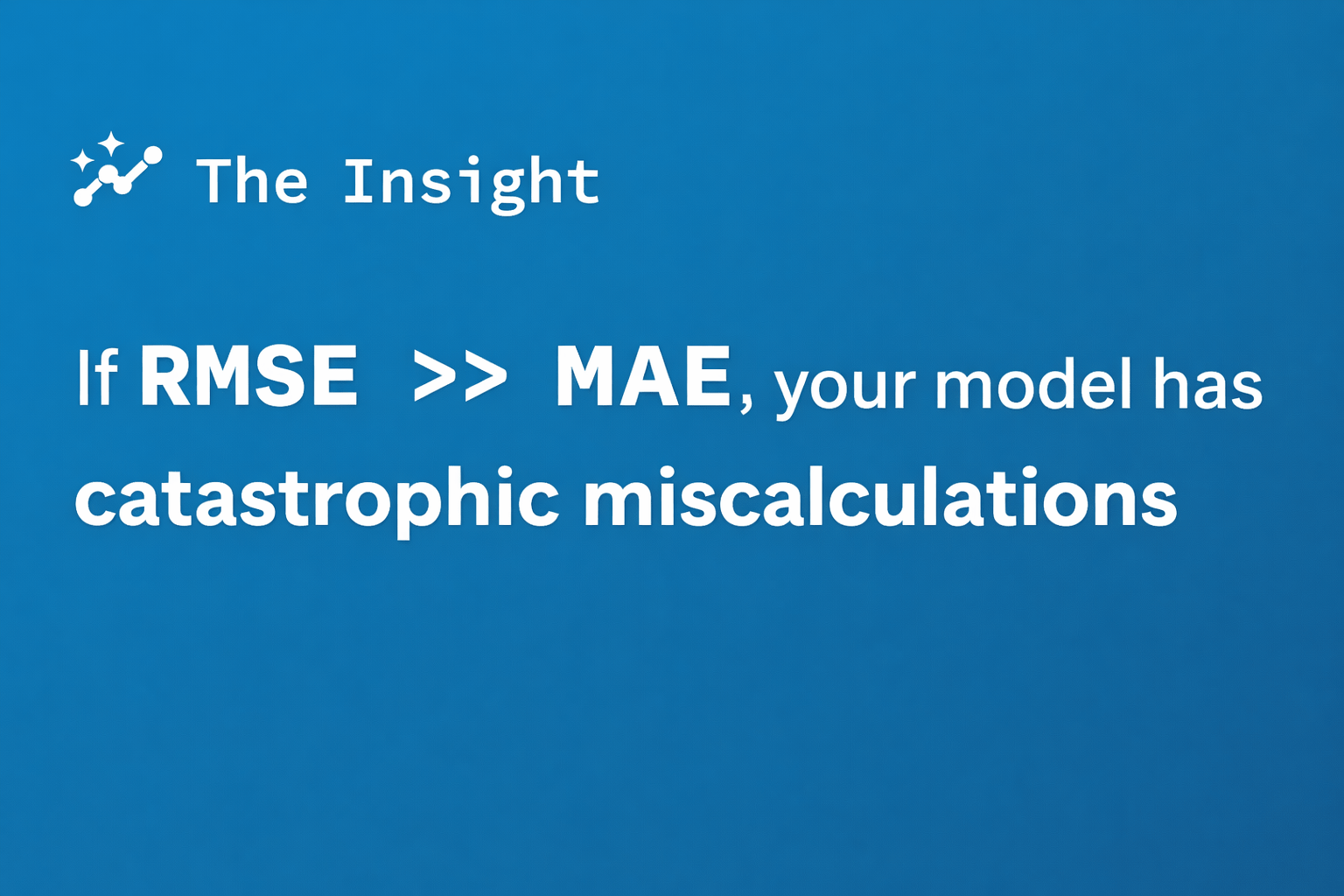
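Compare two hypothetical sets of errors with the same MAE to see why squaring punishes outliers. One set spreads the error evenly; the other concentrates it in a single car. The helpers `mse` and `mae` and the numbers are illustrative.

```python
def mse(errors):
    """MSE = (1/n) * sum(error^2); the result is in squared dollars."""
    return sum(e ** 2 for e in errors) / len(errors)

def mae(errors):
    """MAE = (1/n) * sum(|error|); the result is in dollars."""
    return sum(abs(e) for e in errors) / len(errors)

# Hypothetical errors in dollars: both sets total $4,000 of absolute error over 4 cars
even_errors = [1000, 1000, 1000, 1000]   # every car off by $1,000
one_big_miss = [0, 0, 0, 4000]           # three perfect, one car off by $4,000

print(mae(even_errors), mae(one_big_miss))   # 1000.0 1000.0
print(mse(even_errors), mse(one_big_miss))   # 1000000.0 4000000.0
```

MAE sees no difference between the two sets. MSE is four times larger for the single big miss because the $4,000 error is squared before averaging.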

Metric 3: RMSE (Root Mean Squared Error)

The "Pragmatic" Metric

Best of both worlds: readable units + penalizes large errors

The Formula

RMSE = √MSE

The Fix

Take the square root of MSE to bring the units back to standard dollars

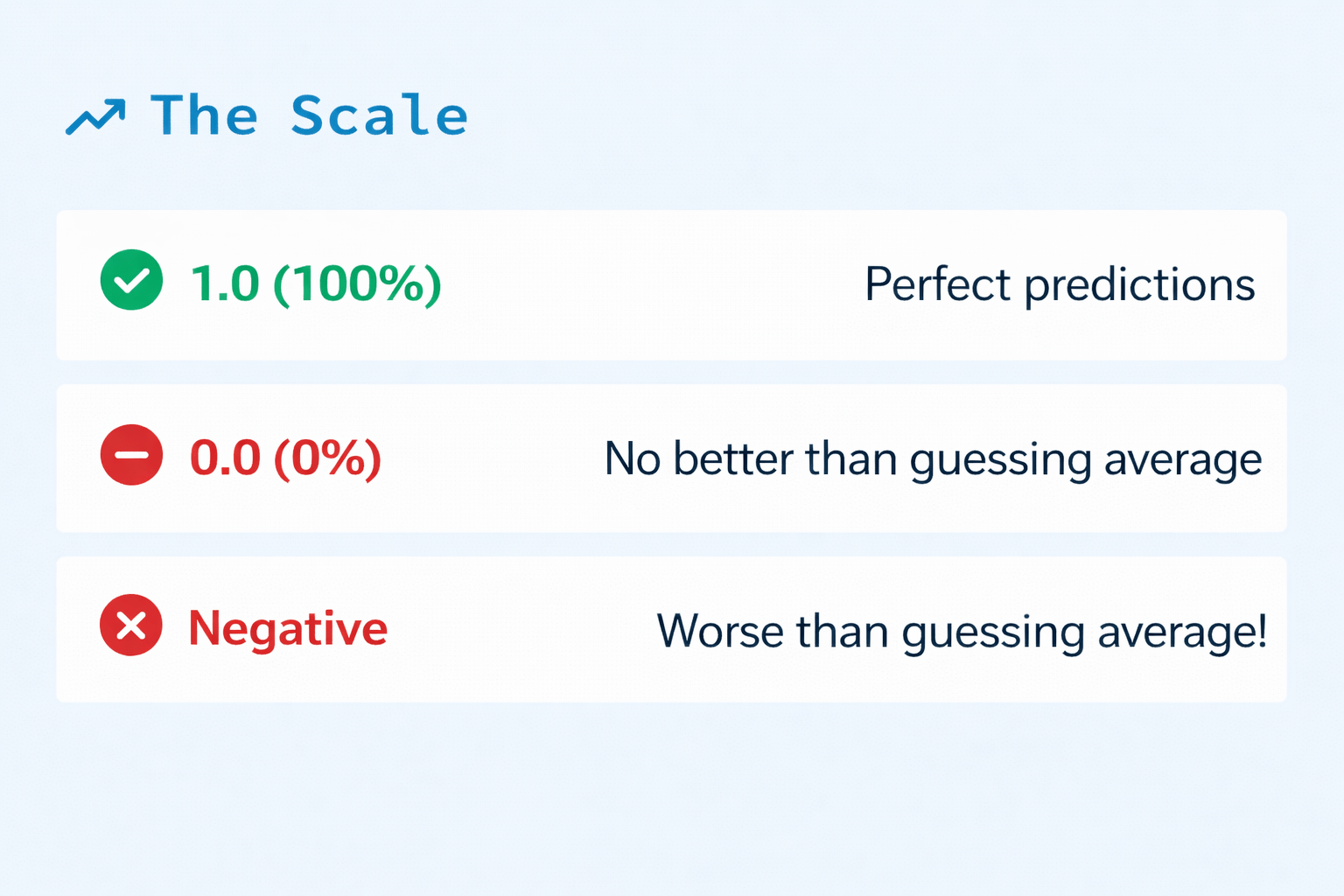
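RMSE is the square root of MSE, which brings the result back to dollars. Below is a minimal sketch using `math.sqrt`, with four hypothetical cars where one is mispriced by $4,000. The helper `rmse` and the prices are illustrative.

```python
import math

def rmse(actual, predicted):
    """RMSE = sqrt((1/n) * sum((Actual - Predicted)^2)); the result is in dollars."""
    squared = [(a - p) ** 2 for a, p in zip(actual, predicted)]
    return math.sqrt(sum(squared) / len(squared))

# Hypothetical prices: four cars, one badly mispriced
actual = [20000, 20000, 20000, 20000]
predicted = [20000, 20000, 20000, 16000]

print(rmse(actual, predicted))  # 2000.0
```

The MAE of the same predictions would be $1,000 (one $4,000 miss averaged over four cars), so RMSE reports twice as much. RMSE is always at least as large as MAE. The wider the gap, the more the error is concentrated in a few large misses.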

Metric 4: R²-score (Coefficient of Determination)

The Concept

How much better is our model compared to just guessing the "Average Car Price" every single time?

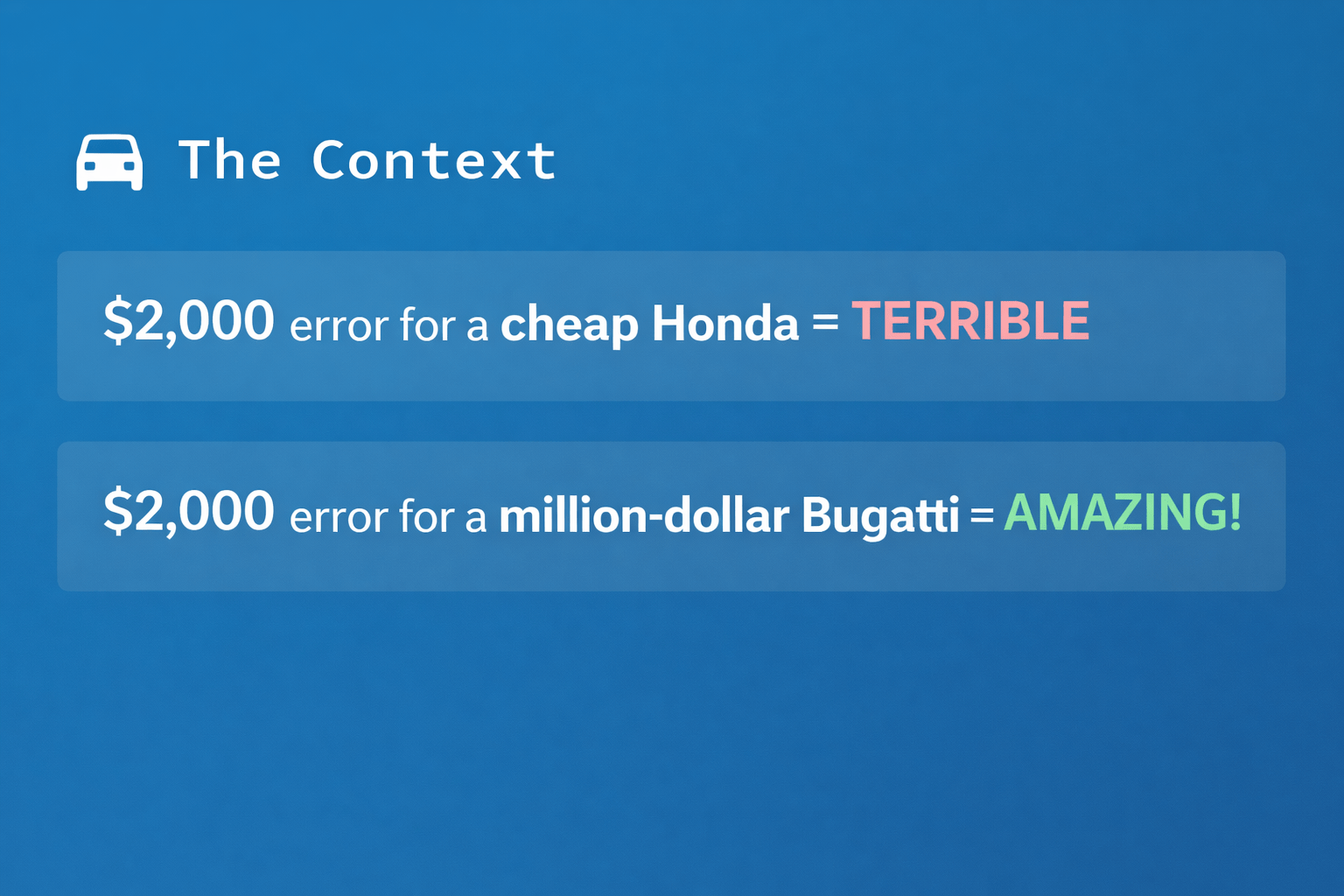

Why R²?

MAE and RMSE tell us the error in dollars. But is a $2,000 error good or bad? That depends on the price range: it is large for $10,000 cars and small for $200,000 cars.

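The "Percentage" Metric

Measures how much the model improves over always guessing the average

The Formula

R² = 1 - Σ (Actual - Predicted)² / Σ (Actual - Average)²

An R² of 1 means perfect predictions. An R² of 0 means no better than always guessing the average price. A negative value means worse than that guess.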
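R² compares the model's squared errors (SS_res) with those of a baseline that always predicts the average price (SS_tot): R² = 1 - SS_res / SS_tot. Below is a minimal sketch in plain Python. The helper name `r2_score` and all prices are hypothetical. scikit-learn computes the same quantity with `sklearn.metrics.r2_score`.

```python
def r2_score(actual, predicted):
    """R^2 = 1 - SS_res / SS_tot, where SS_tot uses the mean of the actual values."""
    mean_actual = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))   # model's squared errors
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)            # "always guess the average" errors
    return 1 - ss_res / ss_tot

# Hypothetical car prices (dollars)
actual = [10000, 20000, 30000, 40000]
good_model = [12000, 19000, 31000, 38000]
average_guess = [25000] * 4               # always predicts the mean price
bad_model = [40000, 30000, 20000, 10000]  # predictions in reverse order

print(r2_score(actual, good_model))     # 0.98
print(r2_score(actual, average_guess))  # 0.0
print(r2_score(actual, bad_model))      # -3.0
```

The reversed predictions score below zero. This is why R² is not strictly bounded between 0 and 1 when a model is evaluated on data it performs poorly on.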

Which Metric Do I Use?

| Metric | What it tells you | Best used when... |
|---|---|---|
| MAE | Average error in actual units (e.g., dollars). | You want an easy-to-explain number for non-technical clients. |
| MSE | Squared error. Punishes large mistakes heavily. | You are training an algorithm (used as a cost function). |
| RMSE | Typical error in actual units, but sensitive to large outliers. | You want readable units but still want large errors penalized. |
| R²-score | Proportion of variance explained (usually 0 to 1; negative if the model is worse than guessing the average). | You want a universal score of how well the model fits the data overall. |

Summary

1. You cannot improve what you cannot measure.
2. Residuals (Actual - Predicted) are the gap between reality and our model's prediction.
3. Use MAE for a simple average error.
4. Use RMSE if you want to penalize massive errors more heavily than MAE does, while keeping readable units.
5. Use R² to get a universal "percentage" score of your model's quality.

Quiz

If your manager asks, "What percentage of the variation in car prices is our algorithm actually able to explain?", which metric should you give them?

A. MSE

B. R²-score

C. Adjusted Residuals

D. RMSE

Quiz-Answer

B. R²-score

R² measures the proportion of the variation in car prices that the model explains, so it directly answers the manager's "what percentage" question.